Hello!

I have been tinkering with some AI tools, specifically NeRF (instant-NGP) and Disco Diffusion. Tons of fun to get them working, and astonishing to see the results!

NeRF in particular is potentially a very powerful ‘photogrammetry’ tool. Using Nvidia’s AI routines, it is possible to create a 3D volume of a space that captures reflections, transparency and accurate lighting.

The tools are quite rough atm but development is accelerating.

I made this by walking around for 1 minute while recording a video. Then I took 1 out of every 24 frames to feed into the AI, and after 5 minutes of processing the volume was ready. From there I can export a movie with a simple camera path, all inside the same software…

Of course I would love to be able to use these volumes in other VFX applications! I can export the volume as a stack of slices; the example below is a volume of 512^3 voxels. The resolution is just enough to make out certain features, such as the hut in the middle. I made this in Fusion.

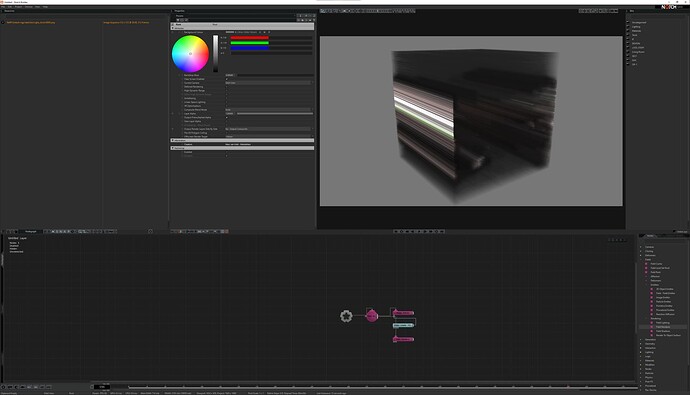
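For a rough sense of the numbers involved, here is a minimal sketch of the arithmetic behind the capture and the export. The source video's frame rate is not stated above, so the rates in the loop are assumptions, and so is the 8-bit RGBA storage format used for the memory estimate. The function names are made up for illustration.

```python
def kept_frames(duration_s: float, fps: float, step: int = 24) -> int:
    """Number of frames left when keeping 1 out of every `step` frames."""
    total = int(duration_s * fps)
    return len(range(0, total, step))


def volume_bytes(side: int = 512, channels: int = 4, bytes_per_channel: int = 1) -> int:
    """Uncompressed size of a side^3 volume (assumed RGBA, 8 bits per channel)."""
    return side ** 3 * channels * bytes_per_channel


if __name__ == "__main__":
    # The camera frame rate is an assumption; try a few common values.
    for fps in (24, 30, 60):
        print(f"{fps} fps, 60 s clip: {kept_frames(60, fps)} training frames")

    size = volume_bytes()
    print(f"512^3 RGBA volume: {size / 2**20:.0f} MiB uncompressed")
```

Under those assumptions, a one-minute clip yields somewhere between 60 and 150 training images, and doubling the volume resolution to 1024^3 would multiply the memory footprint by eight.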

I tried recreating the volume inside Notch, but I can’t get it quite right.

At any given moment the entire field is filled with the same frame (it plays through the 512-frame sequence at 30 fps). Is it possible to have each slice display a consecutive frame of the sequence (and keep these static)? I checked the field documentation but can’t find anything on this… YouTube tutorials didn’t provide an answer either.
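To make the question concrete, here is a small sketch of the two mappings in plain Python. This is not Notch code or any Notch API, just an illustration of the lookup being asked for. The function names are hypothetical, and the looping playback in the first function is an assumption.

```python
NUM_FRAMES = 512  # length of the exported slice sequence


def frame_for_playback(time_s: float, fps: float = 30.0,
                       num_frames: int = NUM_FRAMES) -> int:
    """Current behaviour: the frame depends only on time, so every slice
    of the field shows the same image (looping is assumed here)."""
    return int(time_s * fps) % num_frames


def frame_for_slice(z: float, num_frames: int = NUM_FRAMES) -> int:
    """Desired behaviour: the frame depends only on the slice's depth.
    `z` is the normalised depth in [0, 1]; the result never changes over time."""
    z = min(max(z, 0.0), 1.0)  # clamp to the volume bounds
    return min(int(z * num_frames), num_frames - 1)


if __name__ == "__main__":
    # Same frame everywhere at t = 1 s, regardless of depth:
    print("playback frame at t=1s:", frame_for_playback(1.0))

    # One fixed frame per depth slice:
    for z in (0.0, 0.25, 0.5, 1.0):
        print(f"z={z:.2f} -> frame {frame_for_slice(z)}")
```

In other words, the sequence index would need to be driven by the slice's position along the depth axis instead of by the timeline.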

What I would really love to see is native support for these volumes inside Notch. Since Notch was one of the first applications to support some other Nvidia AI tech, I am hoping this tech might be adopted in Notch as well. It would unlock lots of possibilities for artists and technicians.